Update Jan 6, 2019: The previously posted PowerShell script had some breaking changes, so both scripts below (one for groups & one for users) have been updated to work with Windows PowerShell version 5.1.

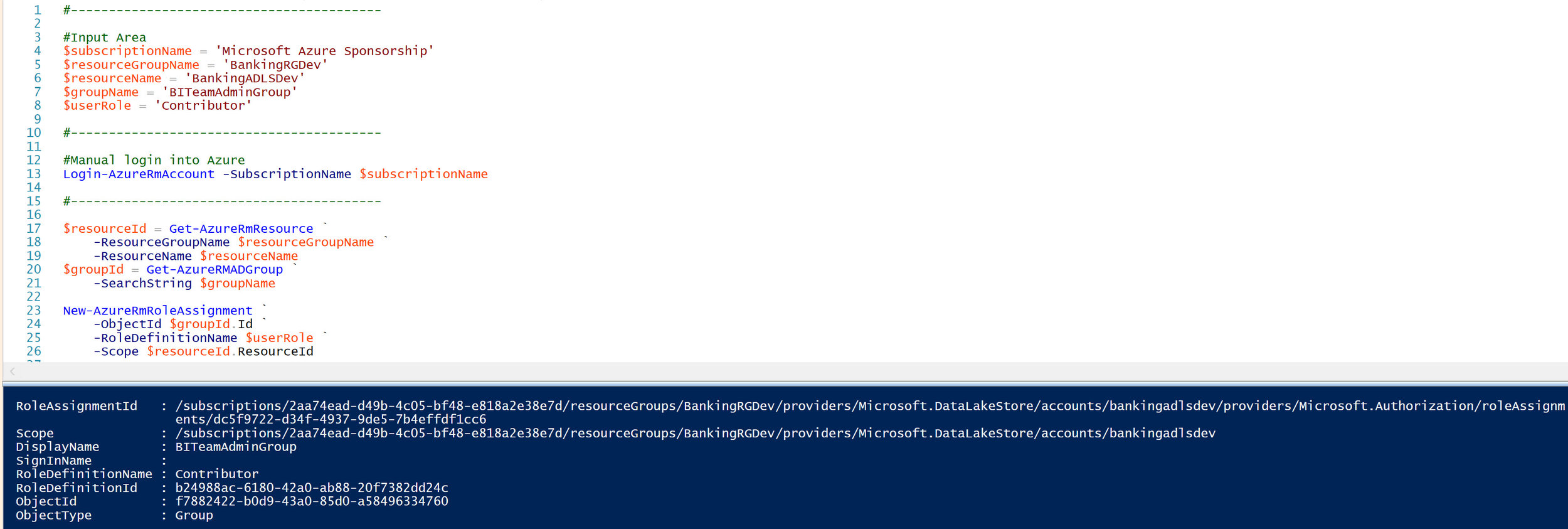

This is part 3 in a short series on Azure Data Lake permissions.

Part 1 - Granting Permissions in Azure Data Lake

Part 2 - Assigning Resource Management Permissions for Azure Data Lake Store

Part 3 - Assigning Data Permissions for Azure Data Lake Store {you are here}

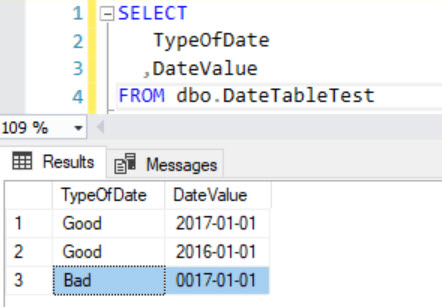

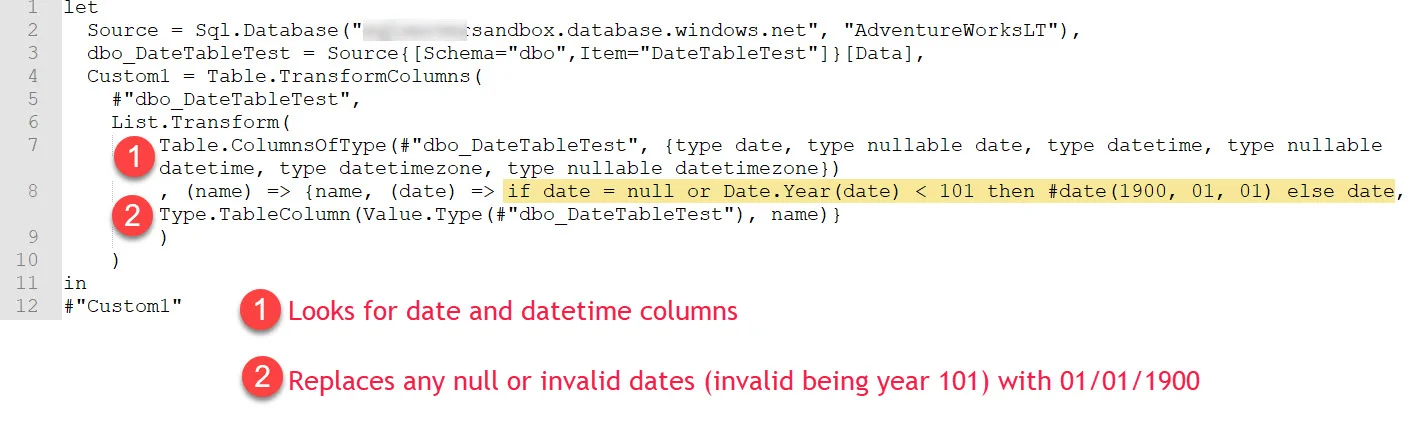

In this section, we're covering the "data permissions" for Azure Data Lake Store (ADLS). The ACL (access control list) grants permissions to create, read, and/or modify files and folders stored in the ADLS service. Uploading and downloading data fall into this category of ACLs. If you come from the Unix or Linux world, the POSIX-style ACLs will be a familiar concept.

There are two types of ACLs: Access ACLs and Default ACLs.

An Access ACL is the set of read/write/execute permissions specified for a folder or file. Every single folder or file has its security explicitly defined -- so that means the ADLS security model is not an 'inheritance' model. That is an important concept to remember.

A Default ACL is like a 'template' setting at a folder level (the concept of a default doesn't apply at the file level). Any new child item placed in that folder will automatically obtain that default security setting. The default ACLs are absolutely critical, given that data permissions aren't an inheritance model. You want to avoid a situation where a user has permission to read a folder, but is unable to see any of the files within the folder -- that situation will happen if a new file gets added to a folder which has an access ACL set at the folder level, but not a default ACL to apply to new child objects.
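To make the 'template' behavior concrete, here is a minimal sketch of it as a toy in-memory model. It makes no Azure calls, and the function names (New-ToyFolder, Add-ToyChildFile), the file names, and the 'r-x' notation are assumptions for illustration only. It ignores details such as masks and owner entries.

```powershell
# Toy in-memory model of access vs. default ACLs (illustration only; no Azure calls).
# $null stands for "no entry for the group".
function New-ToyFolder {
    param($AccessAcl, $DefaultAcl)
    return @{ AccessAcl = $AccessAcl; DefaultAcl = $DefaultAcl; Children = @{} }
}

function Add-ToyChildFile {
    param([hashtable]$Folder, [string]$Name)
    # A new file copies the parent's default entry as its own access entry.
    # If the parent has no default entry, the file gets no entry at all.
    $Folder.Children[$Name] = @{ AccessAcl = $Folder.DefaultAcl }
}

# Folder with an access entry but no default entry.
$folder = New-ToyFolder -AccessAcl 'r-x'
Add-ToyChildFile -Folder $folder -Name 'before.csv'

# Adding the default entry later does not touch the file created earlier.
$folder.DefaultAcl = 'r-x'
Add-ToyChildFile -Folder $folder -Name 'after.csv'

$folder.Children['before.csv'].AccessAcl   # nothing: the group cannot read this file
$folder.Children['after.csv'].AccessAcl    # r-x
```

The first file is the 'invisible file' situation described above: the folder is readable, but the file created before the default entry existed has no entry for the group.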

Tips for Assigning Data Permissions for ADLS

Organize your data lake folders and files so that folder-level security is one of the main considerations, and so that security is easier to manage.

Access to raw data is typically highly limited. This is partly because raw data is hard to use in its original form, and partly because the goal for the raw area is to ingest data as quickly as possible. Every single file in ADLS has its own security properties specified, which is one of several reasons why a large number of very tiny files in ADLS is generally discouraged.

Typically in a data lake, the majority of users need only read+execute rights to consume the data. However, you may also have an area like a 'manual drop zone' or an 'analytics sandbox' where select users have write permissions to create, modify & delete folders and files. Generally speaking, write permissions in a data lake are minimal.

Be selective with granting permissions at the root level ("/"). It does minimize maintenance if you define an access ACL + default ACL at the root level, but only if you feel confident that is adequate security.

Try to use Azure Active Directory (AAD) groups whenever you can to grant access, rather than individual user accounts. This is a consistent best practice for managing security across many types of systems. This will reduce maintenance, and reduce the risk of inaccurate or out of date user permissions.

Currently the maximum number of ACL entries that can be assigned to a file or folder is 32. This is another big reason to use AAD groups for managing access, rather than individual users.

Try to assign access at the folder level whenever you can. Although ADLS doesn't have a true inheritance model, you can set a 'default' entry which will handle new child items.

Be aware that changing a default ACL on a folder doesn't automatically propagate to change the default ACL on any existing child folders. So, managing changes to existing data needs to be done carefully. Although it can seem like the default ACLs act like an inheritance model in some respects, it definitely is not.

Grant access to an AAD application (aka service principal identity or SPI) for automated operations, such as data loads. For service principals, you often can assign just the data permissions (the ACL) and not any permissions to the ADLS service (the RBAC). Check Part 4 for more discussion about service principals.

You almost always want to assign read + execute permissions together. The execute permissions allow a user to traverse the folder structure to where a file resides, which is needed in conjunction with the read (or write) permissions for the actual file.
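As a rough illustration of why execute matters, the sketch below lists every folder a user has to traverse to reach a given item. The function name Get-TraversalFolders and the file name atm.csv are hypothetical. The code only manipulates path strings and does not call Azure.

```powershell
# Returns every folder from the root down to the parent of the given item.
# Each of these folders needs at least Execute for the item to be reachable.
function Get-TraversalFolders {
    param([Parameter(Mandatory)][string]$ItemPath)

    # Split on '/' and drop the empty segments caused by leading or trailing slashes.
    $segments = @($ItemPath -split '/' | Where-Object { $_ -ne '' })
    $folders  = @('/')
    $current  = ''

    # Skip the last segment: that is the item itself, not a folder to traverse.
    for ($i = 0; $i -lt $segments.Count - 1; $i++) {
        $current += '/' + $segments[$i]
        $folders += $current
    }
    return $folders
}

Get-TraversalFolders -ItemPath '/ATMMachineData/StandardizedData/atm.csv'
# /
# /ATMMachineData
# /ATMMachineData/StandardizedData
```

Read (or write) is then needed on the item itself, which is why the two permissions are usually granted together.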

The portal interface makes it easy to apply permissions to existing child-level folders and files. It's a little harder to do via scripting, since your script needs to be explicitly set up to handle recursive operations. Therefore, try to assign relevant permissions as early as possible in your design/development/operationalization phase.

When you set permissions on existing data, it can take a little while if you are asking it to recursively traverse the folders and files to set permissions for every object. This is another reason to try to set permissions at the AAD group level, rather than via individual users.

The PowerShell cmdlets to manage ADLS changed in January 2018. See this post: Breaking changes to Azure Data Lake Store cmdlets.

Defining ADLS Data Permissions in the Azure Portal

In my example, I want to assign read + execute permissions for the StandardizedData folder, but not for the RawData folder. In the portal, I open Data Explorer, navigate to the applicable folder (which sets the "scope" for the permissions), then click the Access button:

Click the Add button to select a user or a group. Notice the permissions are read/write/execute. You can have the new permission entry add to all existing child folders & files (which you typically want to do). The last radio button is really important - this lets you set it as both an access entry *and* the default entry.

Important! When using the web interface as shown above, you need to leave the blade open while it assigns permissions. If you navigate away and close it before it completes, the process will get interrupted.

Defining ADLS Data Permissions via PowerShell Script

The technique shown above in the portal is convenient for quick changes, for learning, or for "one-off" scenarios. However, in an enterprise solution, or a production environment, it's a better practice to handle permissions via a script so you can do things such as:

Promote changes through different environments

Pass off scripts to an administrator to run in production

Include permission settings in source control

The two scripts below (option 1 is for groups; option 2 is for users) are split into 4 sub-steps. You might not need all these steps (for instance, if you want to specify permissions at the root level rather than at a subfolder level which is done in the example below).

Important! Definitely make sure to assign the default entries as well as the access entries or you’ll run into problems (the default entries are part of step 4 below). If you were to accidentally set an access entry for a folder without any sort of default entry, new files which are added to the folder won’t have permissions (thus making the files ‘invisible’ to users).
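As a complement to the full scripts, here is a minimal sketch that expresses the four sub-steps as plain data, which can be easier to review and keep in source control. The paths and permission values are taken from the scripts in this post. The function name New-AclPlan and the all-zero object ID are placeholders I made up for illustration. Building the plan does not contact Azure.

```powershell
# Builds the four parameter sets (one per sub-step) for a single AAD object ID.
# Pure data: nothing is sent to Azure until a set is splatted onto the cmdlet.
function New-AclPlan {
    param(
        [Parameter(Mandatory)][string]$AccountName,
        [Parameter(Mandatory)][string]$ObjectId,
        [string]$AceType = 'Group'
    )
    $common = @{ AccountName = $AccountName; AceType = $AceType; Id = $ObjectId }

    # Steps 1 and 2: traverse permissions on the root and the parent folder.
    $step1 = $common + @{ Path = '/';               Permissions = 'ReadExecute' }
    $step2 = $common + @{ Path = '/ATMMachineData'; Permissions = 'ReadExecute' }
    # Step 3: access entries on the target folder and its existing children.
    $step3 = $common + @{ Path = '/ATMMachineData/StandardizedData'; Permissions = 'All'; Recurse = $true }
    # Step 4: the same again as default entries, so new children pick them up.
    $step4 = $step3 + @{ Default = $true }

    return @($step1, $step2, $step3, $step4)
}

# Placeholder object ID; in practice this comes from Get-AzureRmADGroup or Get-AzureRmADUser.
$plan = New-AclPlan -AccountName 'bankingadlsdev.azuredatalakestore.net' `
                    -ObjectId '00000000-0000-0000-0000-000000000000'

# Print a short summary of each step for review.
$plan | ForEach-Object { '{0}  {1}  Default={2}' -f $_.Path, $_.Permissions, [bool]$_.Default }

# To apply the plan (requires an authenticated AzureRM session):
# foreach ($step in $plan) { Set-AzureRmDataLakeStoreItemAclEntry @step }
```

Keeping step 4 derived from step 3 makes it harder to set an access entry without its matching default entry, which is the mistake the warning above is about.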

Option 1 - Permissions at the Group Level (recommended)

    #This script sets ADLS data-level permissions for one or more AAD groups.
    #It is a basic ad hoc script (without error handling, etc).
    #Script last tested with PowerShell version 5.1 on Jan 6, 2019.

    #-----------------------------------------
    #Input Area
    #-----------------------------------------
    $subscriptionName = 'Microsoft Azure Sponsorship'
    $dataLakeStoreName = 'bankingadlsdev.azuredatalakestore.net'
    $groupNameArray = @('DataReaderGroup','DataWriterGroup')
    $adlsPermissionType = 'Group'
    $adlsTraversePermission = 'ReadExecute'
    $adlsPathPermission = 'All' #All = Read,Write,Execute
    $adlsRoot = '/'
    $adlsPath1 = '/ATMMachineData'
    $adlsPath2 = '/ATMMachineData/StandardizedData'

    #-----------------------------------------
    #Manual login into Azure
    #-----------------------------------------
    #Login-AzureRmAccount -SubscriptionName $subscriptionName

    #-----------------------------------------
    #Step 1: Set root permissions to traverse
    #-----------------------------------------
    Write-Host '[*] Begin setting the root permissions to traverse (read + execute).'
    ForEach ($groupName in $groupNameArray)
    {
        $groupId = Get-AzureRmADGroup -DisplayName $groupName
        Set-AzureRmDataLakeStoreItemAclEntry `
            -AccountName $dataLakeStoreName `
            -Path $adlsRoot `
            -AceType $adlsPermissionType `
            -Permissions $adlsTraversePermission `
            -Id $groupId.Id
        Write-Host "[*] Complete setting root permissions for $groupName."
    }

    #-----------------------------------------
    #Step 2: Set parent folder permissions to traverse
    #-----------------------------------------
    Write-Host '[*] Begin setting parent folder permission to traverse (read + execute).'
    ForEach ($groupName in $groupNameArray)
    {
        $groupId = Get-AzureRmADGroup -DisplayName $groupName
        Set-AzureRmDataLakeStoreItemAclEntry `
            -AccountName $dataLakeStoreName `
            -Path $adlsPath1 `
            -AceType $adlsPermissionType `
            -Permissions $adlsTraversePermission `
            -Id $groupId.Id
        Write-Host "[*] Complete setting parent folder permissions for $groupName."
    }

    #-----------------------------------------
    #Step 3: Set ACLs recursively
    #-----------------------------------------
    Write-Host '[*] Begin setting the ACL (All permissions set recursively on all child items).'
    ForEach ($groupName in $groupNameArray)
    {
        $groupId = Get-AzureRmADGroup -DisplayName $groupName
        Set-AzureRmDataLakeStoreItemAclEntry `
            -AccountName $dataLakeStoreName `
            -Path $adlsPath2 `
            -AceType $adlsPermissionType `
            -Permissions $adlsPathPermission `
            -Id $groupId.Id `
            -Recurse `
            -Concurrency 128
        Write-Host "[*] Complete setting ACLs for $groupName."
    }

    #-----------------------------------------
    #Step 4: Set default entries recursively
    #-----------------------------------------
    Write-Host '[*] Begin setting the default entry so any new items under this path will obtain the access.'
    ForEach ($groupName in $groupNameArray)
    {
        $groupId = Get-AzureRmADGroup -DisplayName $groupName
        Set-AzureRmDataLakeStoreItemAclEntry `
            -AccountName $dataLakeStoreName `
            -Path $adlsPath2 `
            -AceType $adlsPermissionType `
            -Permissions $adlsPathPermission `
            -Id $groupId.Id `
            -Default `
            -Recurse `
            -Concurrency 128
        Write-Host "[*] Complete setting default entries for $groupName."
    }

Option 2 - Permissions at the User Level

    #This script sets ADLS data-level permissions for one or more AAD users.
    #It is a basic ad hoc script (without error handling, etc).
    #Script last tested with PowerShell version 5.1 on Jan 6, 2019.

    #-----------------------------------------
    #Input Area
    #-----------------------------------------
    $subscriptionName = 'Microsoft Azure Sponsorship'
    $dataLakeStoreName = 'bankingadlsdev.azuredatalakestore.net'
    $userNameArray = @('WayneWriter@sqlchick.com','RayReader@sqlchick.com')
    $adlsPermissionType = 'User'
    $adlsTraversePermission = 'ReadExecute'
    $adlsPathPermission = 'All' #All = Read,Write,Execute
    $adlsRoot = '/'
    $adlsPath1 = '/ATMMachineData'
    $adlsPath2 = '/ATMMachineData/StandardizedData'

    #-----------------------------------------
    #Manual login into Azure
    #-----------------------------------------
    Login-AzureRmAccount -SubscriptionName $subscriptionName

    #-----------------------------------------
    #Set the data / POSIX / ACL permissions
    #-----------------------------------------
    Write-Host '[*] Begin setting the root permissions to traverse (read + execute).'
    ForEach ($userName in $userNameArray)
    {
        $userId = Get-AzureRmADUser -UPN $userName
        Set-AzureRmDataLakeStoreItemAclEntry `
            -AccountName $dataLakeStoreName `
            -Path $adlsRoot `
            -AceType $adlsPermissionType `
            -Permissions $adlsTraversePermission `
            -Id $userId.Id
        Write-Host "[*] Complete setting root permissions for $userName."
    }

    Write-Host '[*] Begin setting the parent folder permission to traverse (read + execute).'
    ForEach ($userName in $userNameArray)
    {
        $userId = Get-AzureRmADUser -UPN $userName
        Set-AzureRmDataLakeStoreItemAclEntry `
            -AccountName $dataLakeStoreName `
            -Path $adlsPath1 `
            -AceType $adlsPermissionType `
            -Permissions $adlsTraversePermission `
            -Id $userId.Id
        Write-Host "[*] Complete setting parent folder permissions for $userName."
    }

    Write-Host '[*] Begin setting the ACL (All permissions set recursively on all child items).'
    ForEach ($userName in $userNameArray)
    {
        $userId = Get-AzureRmADUser -UPN $userName
        Set-AzureRmDataLakeStoreItemAclEntry `
            -AccountName $dataLakeStoreName `
            -Path $adlsPath2 `
            -AceType $adlsPermissionType `
            -Permissions $adlsPathPermission `
            -Id $userId.Id `
            -Recurse `
            -Concurrency 128
        Write-Host "[*] Complete setting ACLs for $userName."
    }

    Write-Host '[*] Set the default entry so any new items under this path will obtain the access.'
    ForEach ($userName in $userNameArray)
    {
        $userId = Get-AzureRmADUser -UPN $userName
        Set-AzureRmDataLakeStoreItemAclEntry `
            -AccountName $dataLakeStoreName `
            -Path $adlsPath2 `
            -AceType $adlsPermissionType `
            -Permissions $adlsPathPermission `
            -Id $userId.Id `
            -Default `
            -Recurse `
            -Concurrency 128
        Write-Host "[*] Complete setting default entries for $userName."
    }

Finding More Information

PowerShell Cmdlets for Azure Data Lake Store

Breaking Changes to Data Lake Store Cmdlets

Access Control in Azure Data Lake Store <--Definitely take time to read this